The VFX industry is growing extremely fast, to the extent that in 2021 there is virtually nothing that can’t be put on screen: fantasy worlds, the lives of animals, space travel, even black holes have all been computer generated. Every year brings yet another accomplishment in VFX technology, which we celebrate globally with awards and ecstatic YouTube reviews.

Trends in the Visual Effects Industry

But think about how much we cringe when we watch those same videos a few years later. The VFX trends that once left us in awe start to look weird and all too visible with time. That feeling is strong evidence that VFX is advancing at an extremely fast pace, and that its future is full of new breakthroughs.

Digital De-Aging

In 2019, ‘The Irishman’, directed by Martin Scorsese, made headlines with its revolutionary de-aging technology that turned 70-something actors young again. Top visual effects artists had to figure out how to rejuvenate Robert De Niro, Al Pacino, and Joe Pesci so that the director could tell a gangster story spanning several decades.

It wasn’t filmmakers’ first attempt at de-aging, though. It had already been done in ‘X-Men’, ‘Benjamin Button’, ‘Blade Runner 2049’, and many other pieces. What made the VFX of ‘The Irishman’ so groundbreaking was the approach: Martin Scorsese was against tracking dots and “golf balls” on the actors’ faces, so the VFX team spent two years developing the technology and hardware that made it all possible.

How does it work?

Cinema has found several ways to make actors look younger over its history. Pioneers of black-and-white film used red and green makeup in combination with red and green lens filters. Red makeup showed up clearly without a filter, making actors look older, while a red filter made those shades disappear completely, making the actors look young and fresh again.

When it comes to digital de-aging in movies, though, makeup offers no help, at least not on set. The Mova Contour facial capture system, developed in 2007, requires actors to wear green fluorescent paint while sitting inside a structure of camera arrays. The actors then perform nearly 70 facial expressions so that the cameras can capture the movements and positions of the paint dots on their faces. With all those details, VFX artists can recreate an actor’s appearance digitally as a kind of mask. This mask is then applied to the acting doubles who step in on set to play younger versions of the main actors.

Scorsese refused to use doubles to play the young De Niro and company. Instead, he and his VFX crew used a special camera rig that relied on infrared (IR) technology to track the positions of the actors’ faces. The crew also built a library of old footage from movies of the 70s, so that, with the help of IR and AI, the actors’ facial expressions on set could be quickly compared with their images in the library. The result was astonishing.

However, the uncanny valley effect is still present in ‘The Irishman’. Many people attribute it to the fact that the young De Niro, Pacino, and Pesci still move like 70-year-old men. Using doubles could surely have fixed that to some degree, and maybe even saved some time and money.

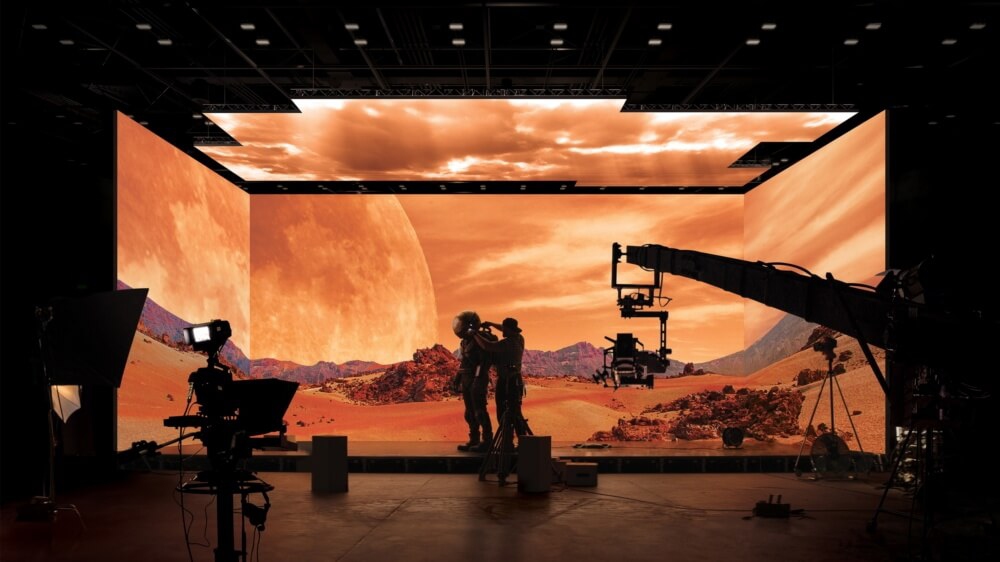

In-Camera VFX

What’s bad about the green screen? There are several answers. First, you have to adjust its position manually whenever the landscape needs to change. Second, it causes green reflections on the actors and the objects around them. The reflection is not really visible to spectators, but specialists quickly spot it as colour spill. Third, green messes up all lighting efforts, especially when the crew tries to shoot outdoor scenes from inside a studio building.

The solution comes from the video game industry and involves Unreal Engine, a 3D creation platform developed by Epic Games, a video game publisher. Instead of green and blue screens, filmmakers use LED screens and display all the necessary landscapes on them. This way, all the VFX that would otherwise be created digitally on top of green screens in post are available on set while filming. The imagery on the screen has a parallax effect: when the camera moves, the objects on the LED wall change their angle as if they were real. And it’s all possible because of the game engine from Epic Games.
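To make the parallax effect a bit more concrete, here is a minimal geometric sketch in Python. It is not how Unreal Engine implements it; it only assumes a flat LED wall lying in the plane z = 0, a camera in front of it, and a virtual object "behind" it. The names `Point3D` and `wall_position`, and all the distances, are made up for illustration.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Point3D:
    x: float
    y: float
    z: float


def wall_position(camera: Point3D, virtual_object: Point3D) -> Tuple[float, float]:
    """Return the (x, y) spot on the LED wall (the plane z = 0) where a
    virtual object must be drawn so that it lines up with the camera's view.

    Assumes the camera is in front of the wall (z > 0) and the virtual
    object is on or behind it (z <= 0).
    """
    if camera.z <= 0 or virtual_object.z > 0:
        raise ValueError("camera must be in front of the wall, object on or behind it")
    # Fraction of the way from the camera to the object at which the
    # line of sight crosses the wall plane z = 0.
    t = camera.z / (camera.z - virtual_object.z)
    x = camera.x + t * (virtual_object.x - camera.x)
    y = camera.y + t * (virtual_object.y - camera.y)
    return (x, y)


mountain = Point3D(0.0, 0.0, -4.0)  # virtual mountain 4 m "behind" the wall
camera_a = Point3D(0.0, 0.0, 4.0)   # camera 4 m in front of the wall
camera_b = Point3D(2.0, 0.0, 4.0)   # the same camera after moving 2 m sideways

print(wall_position(camera_a, mountain))  # (0.0, 0.0)
print(wall_position(camera_b, mountain))  # (1.0, 0.0)
```

In this toy setup, moving the camera 2 m sideways shifts the mountain's image 1 m along the wall, while an object sitting exactly on the wall plane would not move at all. On a real stage, the engine would presumably redo this kind of calculation for the whole scene on every frame, using the tracked position of the camera.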

Universal Scene Description (USD)

VFX pipelines are extremely complex. They sometimes require hundreds of artists from various corners of the world to work on the same frame. That means terabytes of data being sent and exported, and it is crucial not to lose or confuse any of it.

Pixar’s Universal Scene Description was developed for the studio’s 3D animation work to make it easier for artists to collaborate. Pixar needed a format that could represent the complex 3D scenes from its feature films while allowing artists to work on them together and in sync. In 2016, USD was released to the public as open source and was widely adopted, not only by animators but also by 3D specialists across different industries. For VFX specialists, architects, and designers, USD is a sort of digital library of elements that serve as a basis for building other things on top of them and creating objects in 3D.

The USD code is available on GitHub and can be integrated into third-party programs used for 3D rendering. That is why you can find countless Universal Scene Description tutorials on the Internet. Moreover, companies such as Epic Games, Autodesk, SideFX, and Apple have chosen to build their software around USD, which makes it easier for them to represent three-dimensional scenes, including scenes in augmented and virtual reality.
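For a rough idea of what a USD scene looks like, the sketch below builds a tiny layer in USD's human-readable `.usda` text format. It deliberately uses plain Python string building so that it runs without the USD library installed; a real pipeline would normally go through USD's own Python bindings (the `pxr` module) instead. The helper `make_usda_layer` and the prim names "World" and "Ball" are hypothetical examples.

```python
def make_usda_layer(root_name: str, spheres: dict) -> str:
    """Build the text of a small USD layer in the .usda text format.

    `spheres` maps a prim name to a radius; both are made-up example data.
    """
    lines = [
        "#usda 1.0",                          # header that opens every .usda file
        "(",
        f'    defaultPrim = "{root_name}"',   # layer metadata
        ")",
        "",
        f'def Xform "{root_name}"',           # root transform prim
        "{",
    ]
    for name, radius in spheres.items():
        lines += [
            f'    def Sphere "{name}"',       # child prim nested under the root
            "    {",
            f"        double radius = {float(radius)}",
            "    }",
        ]
    lines.append("}")
    return "\n".join(lines) + "\n"


layer_text = make_usda_layer("World", {"Ball": 2.0})
print(layer_text)
```

Saved to a file with the `.usda` extension, text like this should open in tools that read USD. Because the scene is described as named, nested elements rather than one opaque binary file, it is easier for different artists and applications to work on separate parts of the same scene.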

Deepfake

While the digital de-aging technique requires a scan of an actor’s head to create a mask of their face, a deepfake needs only several photos to do the same. CGI face replacement is a popular tool that we usually see on Instagram rather than in professional moviemaking, but it is clear that we shouldn’t dismiss it as mere fun.

Deepfakes are videos created from existing footage in which one person’s face is replaced with someone else’s. The name combines “deep learning” and “fake”: a deep learning algorithm trains a neural network to gather data on facial muscle movements from photos and to recreate them, replacing the likeness of the person in the original video. The technology caused quite a stir after it was used to exploit ordinary people’s likenesses to make porn or to commit financial fraud.

In VFX, deepfakes are not considered a widely used tool, even though they can sometimes achieve impressive results. For instance, audiences couldn’t get past the uncanny valley while watching ‘The Irishman’, saying that the young versions of the actors were not believable or real enough. At the same time, a compilation of scenes from the movie that used deepfake technology to rejuvenate De Niro, Pacino, and Pesci was praised by netizens for looking more photorealistic.